Benchmark and Dataset

We build PANDA (gigaPixel-level humANcentric viDeo dAtaset), the world's first large-scale gigapixel-level video dataset, to promote large-scale, long-range, multi-target visual analysis centered on human behavior. The video and image data in PANDA are collected with gigapixel cameras, which provide both a wide field of view (up to 1 square kilometer of natural scenes, containing up to 4,000 people) and high resolution (close to 1 billion pixels per video frame). The dataset provides hand-labeled, large-scale, high-quality, multi-level annotations, including bounding boxes with detailed attributes (such as human posture and vehicle type) for people and vehicles. PANDA serves as a standardized evaluation benchmark for object detection, multiple object tracking, pedestrian trajectory prediction, and interaction-aware human group detection.

Paper

J. Zhang, J. Zhang, S. Mao, M. Ji, G. Wang, Z. Chen, T. Zhang, X. Yuan, Q. Dai and L. Fang,

IEEE Trans. on Pattern Analysis and Machine Intelligence (TPAMI), Sep. 2021.

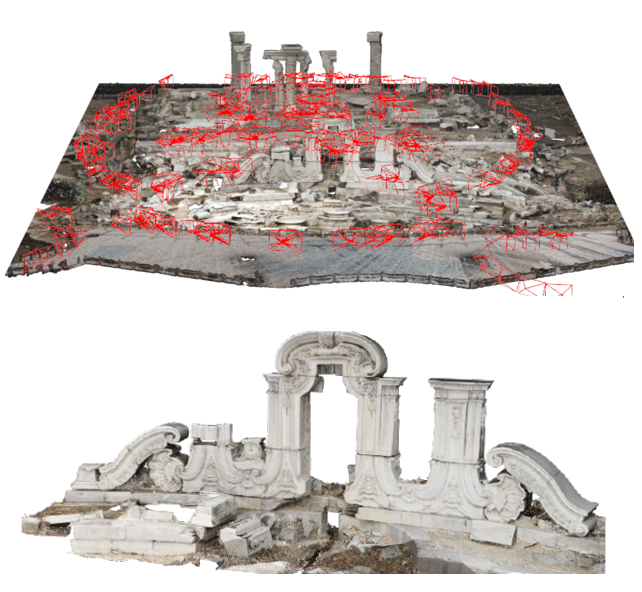

Multiview stereopsis (MVS) methods, which can reconstruct both the 3D geometry and texture from multiple images, have been rapidly developed and extensively investigated, from feature-engineering methods to data-driven ones. However, there is no dataset containing both the 3D geometry of large-scale scenes and high-resolution observations of small details to benchmark the algorithms. To this end, we present GigaMVS, the first gigapixel-image-based 3D reconstruction benchmark for ultra-large-scale scenes. The gigapixel images, with both wide field-of-view and high-resolution details, can clearly observe both the Palace-scale scene structure and Relievo-scale local details. The ground-truth geometry is captured by a laser scanner and covers ultra-large-scale scenes with an average area of 8667 m^2 and a maximum area of 32007 m^2. Due to the extremely large scale, complex occlusion, and gigapixel-level images, GigaMVS exposes the poor effectiveness and efficiency of existing MVS algorithms. We thoroughly investigate the state-of-the-art methods in terms of geometric and textural measurements, which point to the weaknesses of existing methods and promising opportunities for future work. We believe that GigaMVS can benefit the 3D reconstruction community and support the development of novel algorithms balancing robustness, scalability, and accuracy.

LaTeX BibTeX Citation:

@ARTICLE{9547729,

author={Zhang, Jianing and Zhang, Jinzhi and Mao, Shi and Ji, Mengqi and Wang, Guangyu and Chen, Zequn and Zhang, Tian and Yuan, Xiaoyun and Dai, Qionghai and Fang, Lu},

journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},

title={GigaMVS: A Benchmark for Ultra-large-scale Gigapixel-level 3D Reconstruction},

year={2021},

volume={},

number={},

pages={1-1},

doi={10.1109/TPAMI.2021.3115028}}

M. Ji, J. Zhang, Q. Dai and L. Fang,

IEEE Trans. on Pattern Analysis and Machine Intelligence (TPAMI), 2020.

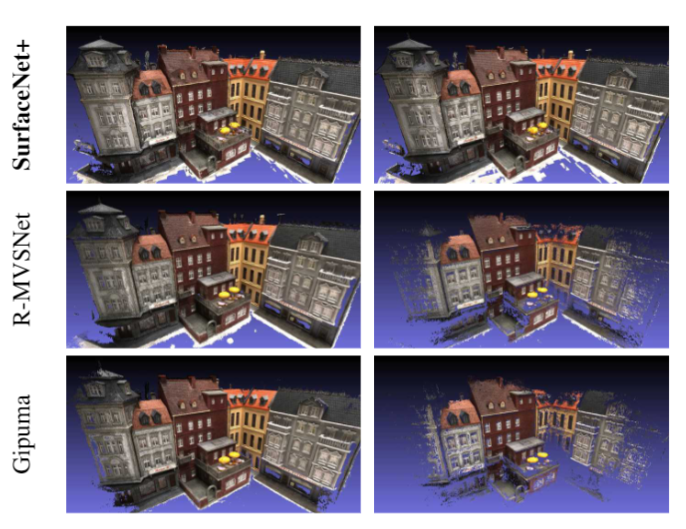

Multi-view stereopsis (MVS) tries to recover the 3D model from 2D images. As the observations become sparser, the significant 3D information loss makes the MVS problem more challenging. Instead of only focusing on densely sampled conditions, we investigate sparse-MVS with large baseline angles, since sparser sampling is always more favorable in practice. By investigating various observation sparsities, we show that the classical depth-fusion pipeline becomes powerless for the case with a larger baseline angle, which worsens the photo-consistency check. As another line of solution, we present SurfaceNet+, a volumetric method to handle the 'incompleteness' and 'inaccuracy' problems induced by a very sparse MVS setup. Specifically, the former problem is handled by a novel volume-wise view selection approach, which excels at selecting valid views while discarding invalid occluded views by considering the geometric prior. Furthermore, the latter problem is handled via a multi-scale strategy that consequently refines the recovered geometry around regions with repeating patterns. The experiments demonstrate the tremendous performance gap between SurfaceNet+ and the state-of-the-art methods in terms of precision and recall. Under the extreme sparse-MVS settings in two datasets, where existing methods can only return very few points, SurfaceNet+ still works as well as in the dense MVS setting.
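The abstract above reports results in terms of precision and recall. As a rough illustration only, the sketch below shows one common way such metrics are computed for point clouds: precision is the fraction of reconstructed points lying within a distance threshold of the ground truth, and recall is the fraction of ground-truth points lying within that threshold of the reconstruction. The function names, the threshold `tau`, and the toy points are assumptions made for this example; they are not taken from the SurfaceNet+ paper or its evaluation code.

```python
import math


def fraction_within(src, dst, tau):
    """Fraction of points in src whose nearest neighbour in dst is within tau."""
    if not src or not dst:
        return 0.0
    hits = 0
    for p in src:
        # Brute-force nearest neighbour; fine for a toy example only.
        nearest = min(math.dist(p, q) for q in dst)
        if nearest <= tau:
            hits += 1
    return hits / len(src)


def precision_recall(reconstructed, ground_truth, tau):
    """Distance-threshold precision and recall between two point sets."""
    precision = fraction_within(reconstructed, ground_truth, tau)
    recall = fraction_within(ground_truth, reconstructed, tau)
    return precision, recall


# Hypothetical toy data: four ground-truth points, two reconstructed points.
ground_truth = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
reconstructed = [(0.0, 0.05, 0.0), (1.0, 0.0, 0.5)]

precision, recall = precision_recall(reconstructed, ground_truth, tau=0.1)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

In this toy case only one of the two reconstructed points is close to the ground truth, and only one of the four ground-truth points is covered, so a sparse reconstruction can score reasonably on precision while scoring poorly on recall. Real benchmarks use spatial indexing (for example a k-d tree) rather than the brute-force search used here.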

LaTeX BibTeX Citation:

@ARTICLE{ji2020surfacenet_plus,

title={SurfaceNet+: An End-to-end 3D Neural Network for Very Sparse Multi-view Stereopsis},

author={M. {Ji} and J. {Zhang} and Q. {Dai} and L. {Fang}},

journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},

year={2020},

volume={},

number={},

pages={1-1},

}

X. Wang, X. Zhang, Y. Zhu, Y. Guo, X. Yuan, L. Xiang, Z. Wang, G. Ding, D. Brady, Q. Dai and L. Fang,

Proc. of Computer Vision and Pattern Recognition (CVPR), 2020.

We present PANDA, the first gigaPixel-level humAN-centric viDeo dAtaset, for large-scale, long-term, and multi-object visual analysis. The videos in PANDA were captured by a gigapixel camera and cover real-world scenes with both wide field-of-view (~1 square kilometer area) and high-resolution details (~gigapixel-level/frame). The scenes may contain 4k head counts with over 100× scale variation. PANDA provides enriched and hierarchical ground-truth annotations, including 15,974.6k bounding boxes, 111.8k fine-grained attribute labels, 12.7k trajectories, 2.2k groups and 2.9k interactions. We benchmark the human detection and tracking tasks. Due to the vast variance of pedestrian pose, scale, occlusion and trajectory, existing approaches are challenged in both accuracy and efficiency. Given the uniqueness of PANDA with both wide FoV and high resolution, a new task of interaction-aware group detection is introduced. We design a 'global-to-local zoom-in' framework, where global trajectories and local interactions are simultaneously encoded, yielding promising results. We believe PANDA will contribute to the community of artificial intelligence and praxeology by understanding human behaviors and interactions in large-scale real-world scenes. PANDA Website: http://www.panda-dataset.com.

LaTeX BibTeX Citation:

@INPROCEEDINGS{9156646,

author={X. {Wang} and X. {Zhang} and Y. {Zhu} and Y. {Guo} and X. {Yuan} and L. {Xiang} and Z. {Wang} and G. {Ding} and D. {Brady} and Q. {Dai} and L. {Fang}},

booktitle={2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

title={PANDA: A Gigapixel-Level Human-Centric Video Dataset},

year={2020},

volume={},

number={},

pages={3265-3275},

doi={10.1109/CVPR42600.2020.00333}}