Multi-camera Imaging

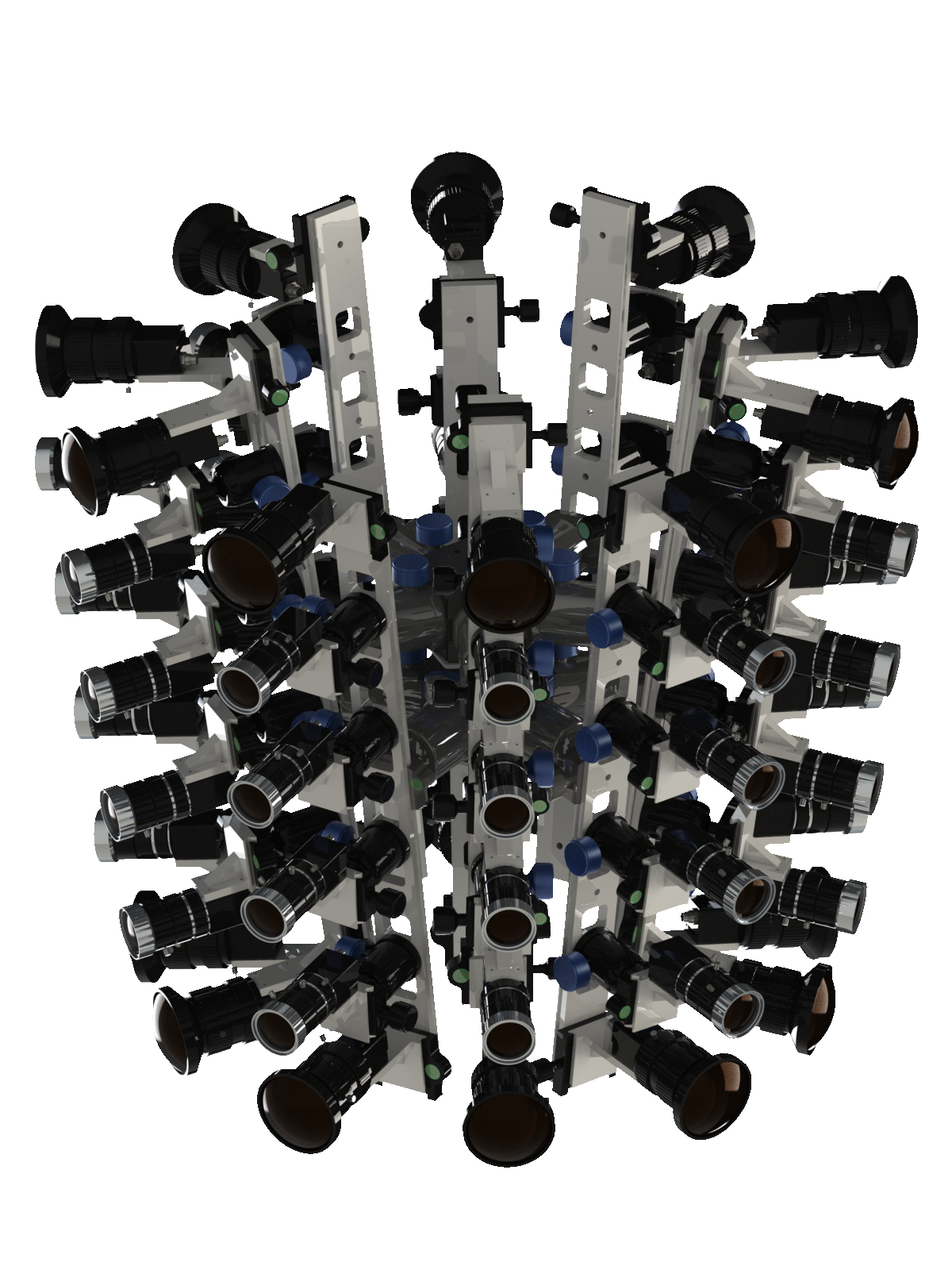

Multi-camera imaging uses a large number of inexpensive cameras to create virtual cameras that outperform real ones. Multi-camera systems can function in many ways depending on the arrangement and aiming of the cameras. We developed an unstructured gigapixel array camera (UnstructuredCam) whose resolution exceeds that of a single camera and of human visual perception, and which captures large-scale dynamic scenes with both a wide field of view (FoV) and high resolution. It can also be extended to large-scale 3D videography, enabling an unprecedented VR experience. We built the PANDA gigapixel dataset with our array camera to promote the study of large-scale, long-range, multi-target visual analysis centered on human behavior.

Paper

X. Yuan, M. Ji, J. Wu, D. Brady, Q. Dai and L. Fang,

"A modular hierarchical array camera,"

Light: Science & Applications, volume 10, Article number: 37 (2021). (cover article)

Array cameras removed the optical limitations of a single camera and paved the way for high-performance imaging via the combination of micro-cameras and computation to fuse multiple aperture images. However, existing solutions use dense arrays of cameras that require laborious calibration and lack flexibility and practicality. Inspired by the cognition function principle of the human brain, we develop an unstructured array camera system that adopts a hierarchical modular design with multiscale hybrid cameras composing different modules. Intelligent computations are designed to collaboratively operate along both intra- and intermodule pathways. This system can adaptively allocate imagery resources to dramatically reduce the hardware cost and possesses unprecedented flexibility, robustness, and versatility. Large scenes of real-world data were acquired to perform human-centric studies for the assessment of human behaviours at the individual level and crowd behaviours at the population level requiring high-resolution long-term monitoring of dynamic wide-area scenes.

Latex Bibtex Citation:

@article{yuan2021modular,

title={A modular hierarchical array camera},

author={Yuan, Xiaoyun and Ji, Mengqi and Wu, Jiamin and Brady, David J and Dai, Qionghai and Fang, Lu},

journal={Light: Science \& Applications},

volume={10},

number={1},

pages={1--9},

year={2021},

publisher={Nature Publishing Group}

}

Y. Tan, H. Zheng, Y. Zhu, X. Yuan, X. Lin, D. Brady and L. Fang,

"CrossNet++: Cross-scale Large-parallax Warping for Reference-based Super-resolution,"

IEEE Trans. on Pattern Analysis and Machine Intelligence (TPAMI), 2020.

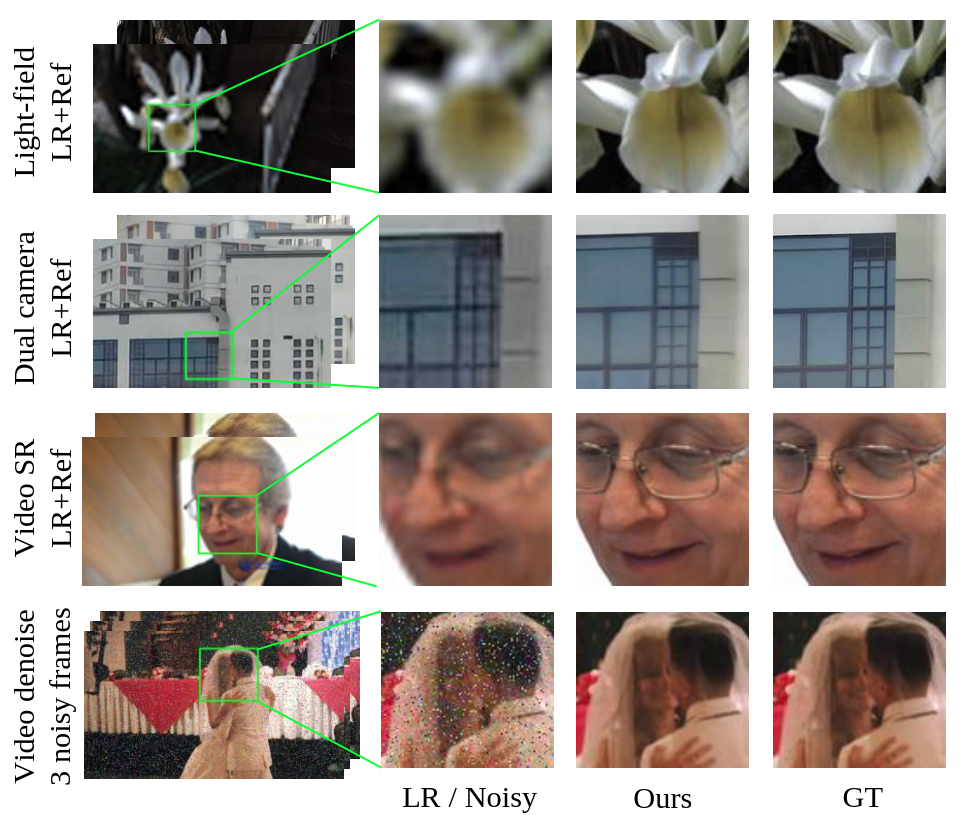

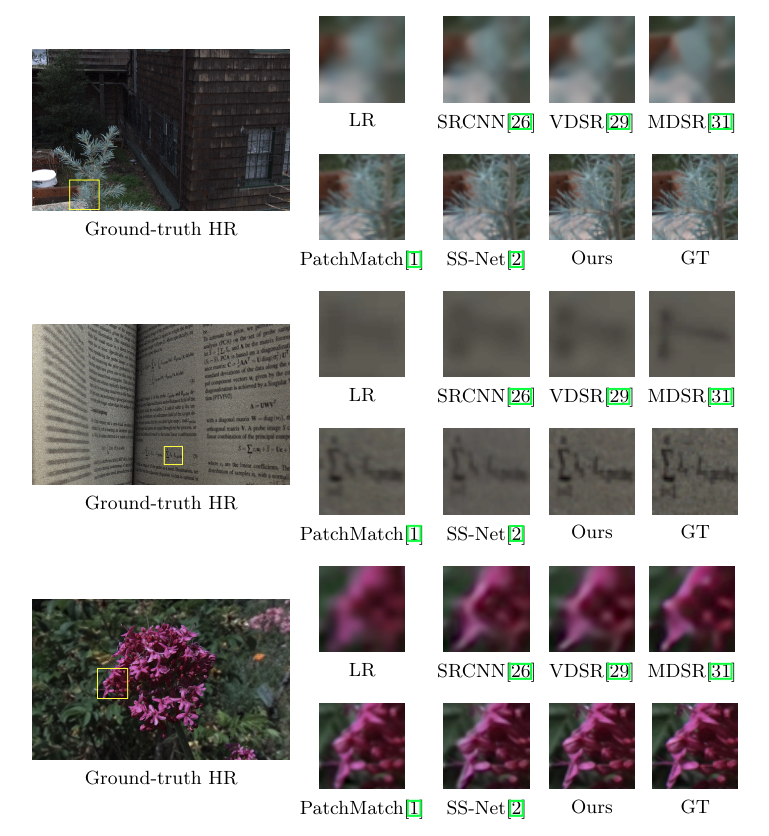

The ability of camera arrays to efficiently capture a higher space-bandwidth product than single cameras has led to various multiscale and hybrid systems. These systems play vital roles in computational photography, including light field imaging, 360° VR cameras, and gigapixel videography. One of the critical tasks in multiscale hybrid imaging is matching and fusing cross-resolution images from different cameras under perspective parallax. In this paper, we investigate the reference-based super-resolution (RefSR) problem associated with dual-camera or multi-camera systems, with a significant resolution gap (8x) and large parallax (10% pixel displacement). We present CrossNet++, an end-to-end network containing novel two-stage cross-scale warping modules. Stage I learns to narrow down the parallax with the strong guidance of landmarks and intensity distribution consensus. Stage II then performs finer-grained alignment and aggregation in the feature domain to synthesize the final super-resolved image. To further address the large parallax, new hybrid loss functions comprising warping loss, landmark loss and super-resolution loss are proposed to regularize training and enable better convergence. CrossNet++ significantly outperforms the state of the art on light field datasets as well as real dual-camera data. We further demonstrate the generalization of our framework by transferring it to video super-resolution and video denoising.
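The abstract above names three loss terms but does not define them. As a rough sketch only, a hybrid loss of this kind could be written as a weighted sum. Everything in the block is an assumption made for illustration: the L1 form of the warping and super-resolution terms, the squared-distance landmark term, the weights, and the function names. None of it is taken from the CrossNet++ implementation.

```python
def l1_loss(pred, target):
    """Mean absolute difference between two equally sized 2D images (lists of rows)."""
    total = 0.0
    count = 0
    for row_p, row_t in zip(pred, target):
        for p, t in zip(row_p, row_t):
            total += abs(p - t)
            count += 1
    return total / count


def landmark_loss(pred_pts, ref_pts):
    """Mean squared Euclidean distance between matched landmark positions."""
    sq = [(px - rx) ** 2 + (py - ry) ** 2
          for (px, py), (rx, ry) in zip(pred_pts, ref_pts)]
    return sum(sq) / len(sq)


def hybrid_loss(sr, hr, warped_ref, pred_pts, ref_pts,
                w_warp=1.0, w_landmark=1.0, w_sr=1.0):
    """Weighted sum of warping, landmark and super-resolution terms.

    Hypothetical formulation: the weights and the exact form of each term
    are placeholders chosen for this sketch.
    """
    warp_term = l1_loss(warped_ref, hr)        # warped reference should match the target
    lm_term = landmark_loss(pred_pts, ref_pts)  # warped landmarks should land on their matches
    sr_term = l1_loss(sr, hr)                   # final output should match the target
    return w_warp * warp_term + w_landmark * lm_term + w_sr * sr_term


hr = [[1.0, 2.0], [3.0, 4.0]]
sr = [[1.0, 2.0], [3.0, 5.0]]
warped_ref = [[0.0, 2.0], [3.0, 4.0]]
loss = hybrid_loss(sr, hr, warped_ref, [(0, 0), (1, 1)], [(0, 1), (1, 1)])
print(loss)
```

In a sketch like this, the landmark term supplies a coarse alignment signal even when pixel-wise terms are uninformative because the parallax is large, which is the role the abstract attributes to landmark guidance.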

Latex Bibtex Citation:

@ARTICLE{9099445,

author={Y. {Tan} and H. {Zheng} and Y. {Zhu} and X. {Yuan} and X. {Lin} and D. {Brady} and L. {Fang}},

journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},

title={CrossNet++: Cross-scale Large-parallax Warping for Reference-based Super-resolution},

year={2020},

volume={},

number={},

pages={1-1},

doi={10.1109/TPAMI.2020.2997007}}

X. Wang, X. Zhang, Y. Zhu, Y. Guo, X. Yuan, L. Xiang, Z. Wang, G. Ding, D. Brady, Q. Dai and L. Fang,

"PANDA: A Gigapixel-Level Human-Centric Video Dataset,"

Proc. of Computer Vision and Pattern Recognition (CVPR), 2020.

We present PANDA, the first gigaPixel-level humAN-centric viDeo dAtaset, for large-scale, long-term, and multi-object visual analysis. The videos in PANDA were captured by a gigapixel camera and cover real-world scenes with both a wide field of view (~1 square kilometer area) and high-resolution details (~gigapixel-level/frame). The scenes may contain 4k head counts with over 100× scale variation. PANDA provides enriched and hierarchical ground-truth annotations, including 15,974.6k bounding boxes, 111.8k fine-grained attribute labels, 12.7k trajectories, 2.2k groups and 2.9k interactions. We benchmark the human detection and tracking tasks. Due to the vast variance of pedestrian pose, scale, occlusion and trajectory, existing approaches are challenged in both accuracy and efficiency. Given the uniqueness of PANDA with both wide FoV and high resolution, a new task of interaction-aware group detection is introduced. We design a "global-to-local zoom-in" framework, where global trajectories and local interactions are simultaneously encoded, yielding promising results. We believe PANDA will contribute to the community of artificial intelligence and praxeology by understanding human behaviors and interactions in large-scale real-world scenes. PANDA Website: http://www.panda-dataset.com.

Latex Bibtex Citation:

@INPROCEEDINGS{9156646,

author={X. {Wang} and X. {Zhang} and Y. {Zhu} and Y. {Guo} and X. {Yuan} and L. {Xiang} and Z. {Wang} and G. {Ding} and D. {Brady} and Q. {Dai} and L. {Fang}},

booktitle={2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

title={PANDA: A Gigapixel-Level Human-Centric Video Dataset},

year={2020},

volume={},

number={},

pages={3265-3275},

doi={10.1109/CVPR42600.2020.00333}}


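J. Zhang, T. Zhu, A. Zhang, X. Yuan, Z. Wang, S. Beetschen, L. Xu, X. Lin, Q. Dai and L. Fang,

"Multiscale-VR: Multiscale Gigapixel 3D Panoramic Videography for Virtual Reality,"

Proc. of Int. Conf. on Computational Photography (ICCP), 2020. (Oral)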

Creating virtual reality (VR) content with effective imaging systems has attracted significant attention worldwide following the broad applications of VR in various fields, including entertainment, surveillance, and sports. However, due to the inherent trade-off between field of view and resolution of the imaging system, as well as the prohibitive computational cost, live capture and generation of multiscale 360° 3D video content at an eye-limited resolution for immersive VR experiences faces significant challenges. In this work, we propose Multiscale-VR, a multiscale unstructured camera array computational imaging system for high-quality gigapixel 3D panoramic videography that creates six-degree-of-freedom multiscale interactive VR content. The Multiscale-VR imaging system comprises scalable cylindrical-distributed global and local cameras, where global stereo cameras are stitched to cover a 360° field of view, and unstructured local monocular cameras are adapted to the global camera for a flexible high-resolution video streaming arrangement. We demonstrate that a high-quality gigapixel depth video can be faithfully reconstructed by our deep neural network-based algorithm pipeline, which associates the global depth obtained via stereo matching with the local depth obtained via high-resolution RGB-guided refinement. To generate the immersive 3D VR content, we present a three-layer rendering framework that includes an original layer for scene rendering, a diffusion layer for handling occlusion regions, and a dynamic layer for efficient dynamic foreground rendering. Our multiscale reconstruction architecture enables the proposed prototype system to render highly effective 3D, 360° gigapixel live VR video at 30 fps from the captured high-throughput multiscale video sequences. By using a heterogeneous camera system design, the proposed multiscale interactive VR content generation approach, in contrast to existing single-scale VR imaging systems with structured homogeneous cameras, will open up new avenues of research in VR and provide an unprecedented immersive experience benefiting various novel applications.

Latex Bibtex Citation:

@INPROCEEDINGS{9105244,

author={J. {Zhang} and T. {Zhu} and A. {Zhang} and X. {Yuan} and Z. {Wang} and S. {Beetschen} and L. {Xu} and X. {Lin} and Q. {Dai} and L. {Fang}},

booktitle={2020 IEEE International Conference on Computational Photography (ICCP)},

title={Multiscale-VR: Multiscale Gigapixel 3D Panoramic Videography for Virtual Reality},

year={2020},

volume={},

number={},

pages={1-12},

doi={10.1109/ICCP48838.2020.9105244}}

H. Zheng, M. Ji, H. Wang, Y. Liu and L. Fang,

"CrossNet: An End-to-end Reference-based Super Resolution Network using Cross-scale Warping,"

Proc. of European Conference on Computer Vision (ECCV), 2018.

Reference-based super-resolution (RefSR) super-resolves a low-resolution (LR) image given an external high-resolution (HR) reference image, where the reference image and the LR image share a similar viewpoint but have a significant resolution gap (8x). Existing RefSR methods work in a cascaded way, such as patch matching followed by a synthesis pipeline with two independently defined objective functions, leading to inter-patch misalignment, grid effects and inefficient optimization. To resolve these issues, we present CrossNet, an end-to-end and fully convolutional deep neural network using cross-scale warping. Our network contains image encoders, cross-scale warping layers, and a fusion decoder: the encoders extract multi-scale features from both the LR and the reference images; the cross-scale warping layers spatially align the reference feature map with the LR feature map; the decoder finally aggregates feature maps from both domains to synthesize the HR output. Using cross-scale warping, our network is able to perform spatial alignment at pixel level in an end-to-end fashion, which improves on existing schemes in both precision (around 2dB-4dB) and efficiency (more than 100 times faster).
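The warping layers described above spatially align the reference feature map with the LR feature map. As a minimal sketch, assuming the alignment is expressed as a per-pixel displacement (flow) field, backward warping with bilinear interpolation could look like the following. The function names `bilinear_sample` and `warp_by_flow`, the single-channel list-of-lists representation, and the border clamping are illustrative assumptions, not the CrossNet implementation.

```python
def bilinear_sample(img, x, y):
    """Sample a single-channel image (list of rows) at a fractional (x, y).

    Coordinates outside the image are clamped to the border (an assumption
    of this sketch; other padding rules are equally plausible).
    """
    h, w = len(img), len(img[0])
    x = min(max(x, 0.0), w - 1.0)
    y = min(max(y, 0.0), h - 1.0)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    fx, fy = x - x0, y - y0
    top = img[y0][x0] * (1 - fx) + img[y0][x1] * fx
    bottom = img[y1][x0] * (1 - fx) + img[y1][x1] * fx
    return top * (1 - fy) + bottom * fy


def warp_by_flow(ref, flow):
    """Backward-warp `ref` so that output[y][x] = ref sampled at (x + dx, y + dy).

    `flow[y][x]` is a hypothetical (dx, dy) displacement for each output pixel.
    """
    h, w = len(ref), len(ref[0])
    return [[bilinear_sample(ref, x + flow[y][x][0], y + flow[y][x][1])
             for x in range(w)]
            for y in range(h)]


ref = [[0.0, 1.0, 2.0],
       [3.0, 4.0, 5.0],
       [6.0, 7.0, 8.0]]
# Shift every pixel's sampling location one column to the right.
flow = [[(1.0, 0.0)] * 3 for _ in range(3)]
print(warp_by_flow(ref, flow))
```

Because bilinear sampling is differentiable with respect to both the image values and the displacements, a warping step of this form can sit inside a network trained end to end, which is the property the abstract emphasizes.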

Latex Bibtex Citation:

@inproceedings{zheng2018crossnet,

title={CrossNet: An End-to-end Reference-based Super Resolution Network using Cross-scale Warping},

author={Zheng, Haitian and Ji, Mengqi and Wang, Haoqian and Liu, Yebin and Fang, Lu},

booktitle={Proceedings of the European Conference on Computer Vision (ECCV)},

pages={88--104},

year={2018}

}

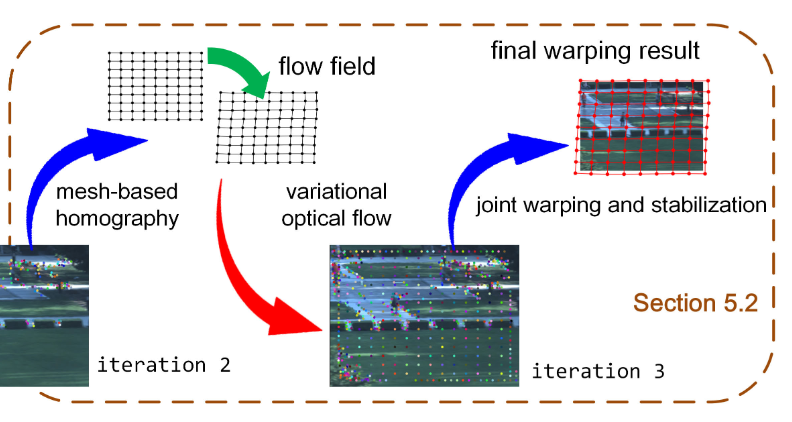

X. Yuan, L. Fang, Q. Dai, D. Brady and Y. Liu,

"Multiscale gigapixel video: A cross resolution image matching and warping approach,"

Proc. of IEEE International Conference on Computational Photography (ICCP), May 2017. (Oral)

We present a multi-scale camera array to capture and synthesize gigapixel videos in an efficient way. Our acquisition setup contains a reference camera with a short-focus lens to capture a large field-of-view video and a number of unstructured long-focus cameras to capture local-view details. Based on this new design, we propose an iterative feature matching and image warping method to independently warp each local-view video to the reference video. The key feature of the proposed algorithm is its robustness and high accuracy in the presence of a huge resolution gap (more than 8x between the reference and the local-view videos), camera parallax, complex scene appearance and color inconsistency among cameras. Experimental results show that the proposed multi-scale camera array and cross-resolution video warping scheme are capable of generating seamless gigapixel video without the need for camera calibration or large overlapping areas between the local-view cameras.
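To make the idea of registering a local view to the reference view concrete, here is a deliberately simplified sketch. It assumes matched keypoints are already available and fits only an isotropic scale plus a translation by least squares. This is a much cruder model than the iterative matching and warping described above, and the function name and the point coordinates are hypothetical.

```python
def fit_scale_translation(src_pts, dst_pts):
    """Least-squares fit of dst ≈ s * src + (tx, ty) with one isotropic scale s.

    src_pts: keypoints in a local-view (high-resolution) frame.
    dst_pts: matched keypoints in the reference (wide-view) frame.
    """
    n = len(src_pts)
    if n < 2 or n != len(dst_pts):
        raise ValueError("need at least two matched point pairs")
    msx = sum(p[0] for p in src_pts) / n
    msy = sum(p[1] for p in src_pts) / n
    mdx = sum(p[0] for p in dst_pts) / n
    mdy = sum(p[1] for p in dst_pts) / n
    num = 0.0
    den = 0.0
    for (sx, sy), (dx, dy) in zip(src_pts, dst_pts):
        num += (sx - msx) * (dx - mdx) + (sy - msy) * (dy - mdy)
        den += (sx - msx) ** 2 + (sy - msy) ** 2
    if den == 0:
        raise ValueError("source points are all identical")
    s = num / den
    return s, mdx - s * msx, mdy - s * msy


# Corners of an 800 x 800 local-view patch and where they land in the reference
# frame (made-up coordinates corresponding to an 8x resolution gap).
local = [(0, 0), (800, 0), (0, 800), (800, 800)]
reference = [(100, 50), (200, 50), (100, 150), (200, 150)]
s, tx, ty = fit_scale_translation(local, reference)
print(s, tx, ty)
```

A fitted scale near 1/8 corresponds to the 8x resolution gap mentioned above. Handling parallax and lens differences would need a richer model, such as a homography or a dense mesh warp.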

Latex Bibtex Citation:

@INPROCEEDINGS{7951481,

author={X. {Yuan} and L. {Fang} and Q. {Dai} and D. J. {Brady} and Y. {Liu}},

booktitle={2017 IEEE International Conference on Computational Photography (ICCP)},

title={Multiscale gigapixel video: A cross resolution image matching and warping approach},

year={2017},

volume={},

number={},

pages={1-9},

doi={10.1109/ICCPHOT.2017.7951481}}